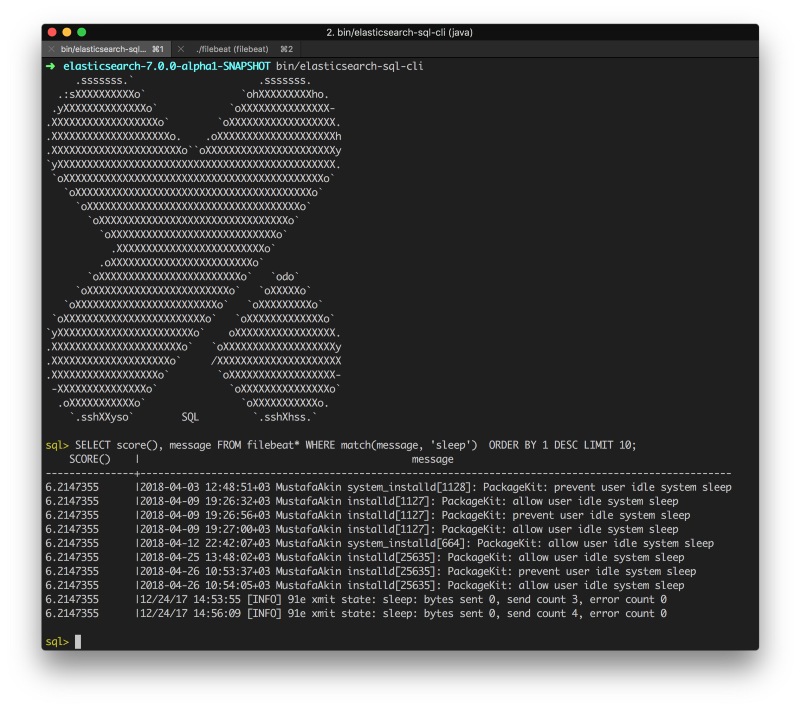

Note that you need to replace /MY_WORKDIR/ by a valid path on your computer for this to work. The docker compose file docker-compose.yml looks like this: We will use the official docker images and there will be a single ElasticSearch node. To make things as simple as possible, we will use docker compose to set them up. There are many ways to install FileBeat, ElasticSearch and Kibana. Although FileBeat is simpler than Logstash, you can still do a lot of things with it. It was created because Logstash requires a JVM and tends to consume a lot of resources. The setup works as shown in the following diagram:ĭocker writes the container logs in files.įileBeat then reads those files and transfer the logs into ElasticSearch.įileBeat is used as a replacement for Logstash. It should be able to decode logs encoded in JSON.

It should be as efficient as possible in terms of resource consumption (cpu and memory).Even after being imported into ElasticSearch, the logs must remain available with the docker logs command.All the docker container logs (available with the docker logs command) must be searchable in the Kibana interface.The setup meets the following requirements: In this article I will describe a simple and minimalist setup to make your docker logs available through Kibana. Regarding how to import the logs into ElasticSearch, there are a lot of possible configurations.īut this is often achieved with the use of Logstash that supports numerous input plugins (such as syslog for example). The Kibana interface let you very easily browse the logs previously stored in ElasticSearch. If you are looking for a self-hosted solution to store, search and analyze your logs, the ELK stack (ElasticSearch, Logstash, Kibana) is definitely a good choice.
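To make the JSON decoding requirement more concrete, here is a minimal Python sketch of what happens conceptually to each line that Docker writes to a container log file. It assumes Docker's default json-file logging driver, where every line is a JSON object with `log`, `stream` and `time` keys. The function name `decode_docker_log_line` and the `json` output field are hypothetical names chosen for illustration; this is not FileBeat's actual implementation.

```python
import json


def decode_docker_log_line(raw_line):
    """Decode one line of a Docker json-file log (hypothetical helper).

    Assumes the default json-file driver format:
    {"log": "...", "stream": "stdout", "time": "..."}
    """
    entry = json.loads(raw_line)
    message = entry.get("log", "").rstrip("\n")
    event = {
        "message": message,
        "stream": entry.get("stream"),
        "time": entry.get("time"),
    }
    # If the application itself logged a JSON object, expose its fields
    # separately so they can be searched individually.
    try:
        payload = json.loads(message)
    except ValueError:
        payload = None
    if isinstance(payload, dict):
        event["json"] = payload
    return event


# Sample lines as the json-file driver would write them (made-up data).
json_line = r'{"log":"{\"level\":\"info\",\"msg\":\"started\"}\n","stream":"stdout","time":"2020-01-01T00:00:00.000000000Z"}'
plain_line = r'{"log":"plain text line\n","stream":"stderr","time":"2020-01-01T00:00:01.000000000Z"}'

json_event = decode_docker_log_line(json_line)
plain_event = decode_docker_log_line(plain_line)
```

Lines whose message is not a JSON object are kept as plain text, so applications that log plain text and applications that log JSON can coexist.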
